Microsoft launches a new deep learning AI platform for Azure

Microsoft unveiled Brainwave, a new deep learning acceleration platform, at the 2017 Hot Chips symposium. The real-time AI platform is designed to speed up how machine learning models run.

Brainwave is built on three main layers:

- A distributed system architecture that delivers high performance

- A deep neural network (DNN) hardware engine synthesized onto field-programmable gate arrays

- A compiler and run-time for safe deployment of trained models

The Brainwave project is designed for real-time AI, meaning the system processes each request as soon as it arrives, without unnecessary delay. Real-time AI is becoming more important as cloud infrastructures increasingly process live data streams, whatever form the data takes: search queries, videos, or user interactions.

Brainwave is expected to provide fast AI processing. It allows cloud-based deep learning models to run across Microsoft's massive FPGA (field-programmable gate array) infrastructure, boosting performance and putting that FPGA computing power to work on AI workloads. Brainwave's software stack supports popular deep learning frameworks such as Microsoft's Cognitive Toolkit and Google's TensorFlow, and support for other DNN frameworks is planned.

Machine learning and deep learning algorithms are increasingly common in today's systems. Running them on Brainwave should significantly reduce the time users wait for a response. Azure users will benefit from Brainwave directly, and Bing users will benefit indirectly. Brainwave aims to give Microsoft Azure users industry-leading AI capabilities and superior scalability.

Zerone develops bespoke software solutions carefully customized to our clients' needs. Contact our experts today for AI solutions to accelerate your business.


We can help!

Re-imagining Outsourcing In The Post-pandemic Era

#Artificialintelligence

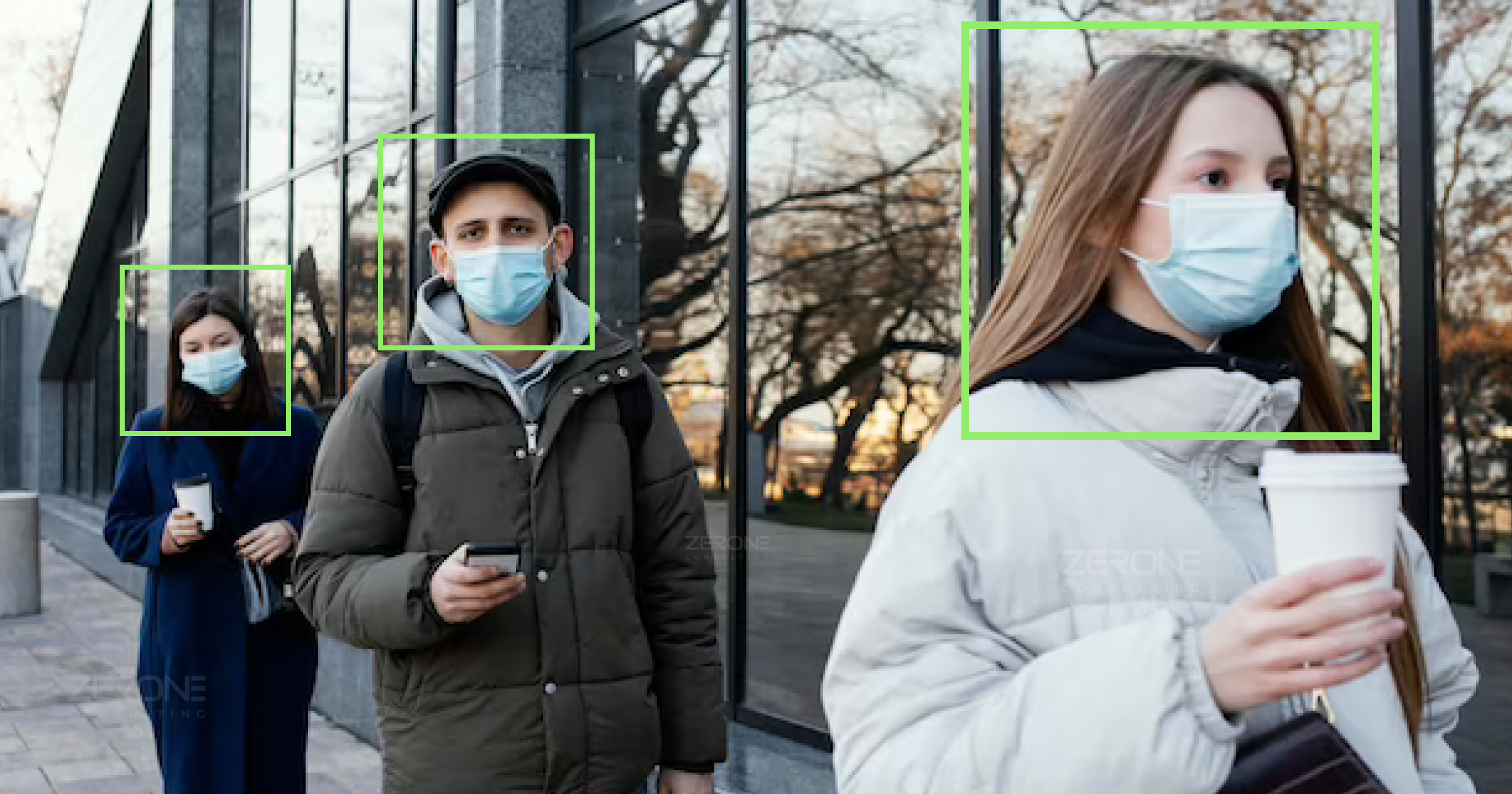

Covid-19 Effect: An Ai Tool To Ensure Social Distancing In Office Premises

#Artificialintelligence